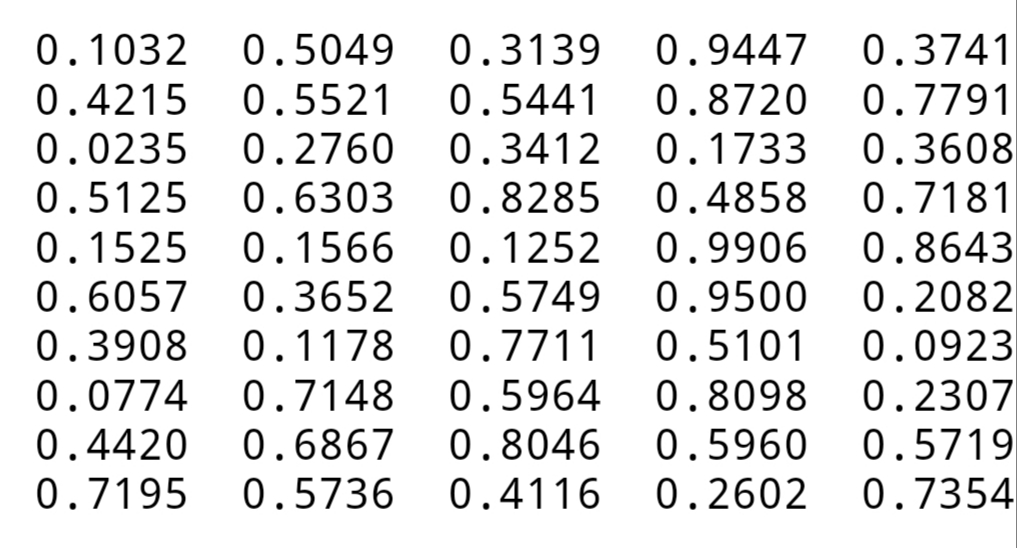

I'm pretty sure this is not quite right as I've stated it because the 0 index (1) is not obtainable from the first choice and I cannot quickly see an alternative 1.50 + 1.50 adjustment that produces 0.99. This "pushes" the peak in the RandNum50() + RandNum50() distribution forward uniformly. I haven't done the analysis to confirm how uniform (or not) this would be, and it could be adjusted to be a true shuffle, but could you just choose, from a starting array of the ith index = i + 1, the (k + RandNum50() + RandNum50() - 1) mod (100 - k) index, with removal, for k = 0.99? Then with this value, you can do the factorial base expansion as described in the post. Or, as Vor suggests, you can reject if $P>n$. This value is not completely random, but it is a measure of uniformity that is often used. Then simply truncate $P$, i.e., $Q=P\mod n$, in your case $n=100!$.

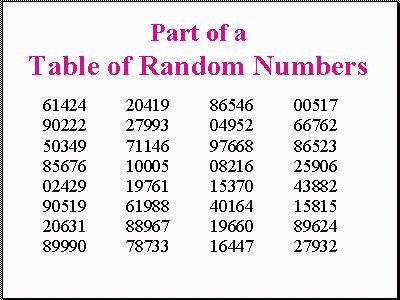

If you do not know how to generate a uniform, as suggested in that post, from a random bit, you could also generate an approximation of the uniform directly, in this way (which is equivalent to Vor's "trulyrand", but faster): P = (RandNum50()-1) + (RandNum50()-1)*50^1 + (RandNum50()-1)*50^2 +.

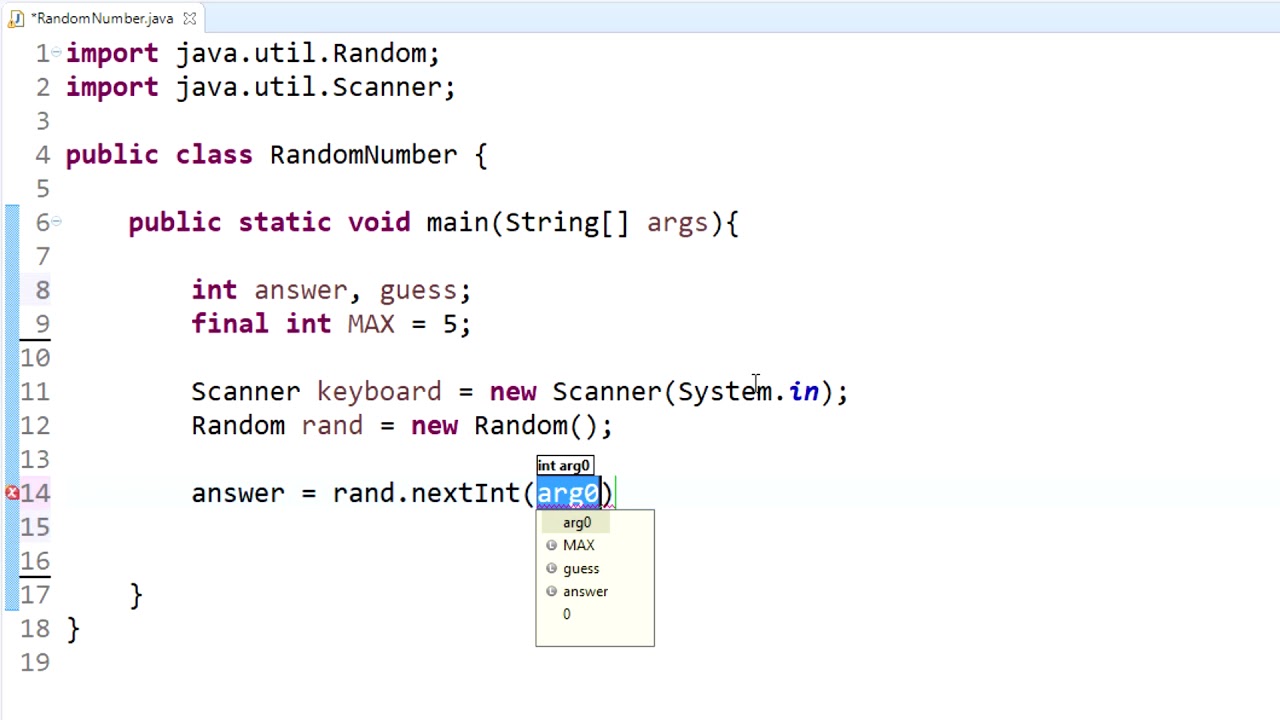

This is actually, as I mention in the post, the topic of a paper I have submitted! The basic idea is that you save a lot of bits if you generate a uniform between $1$ and $n!$, and then using factorial base decomposition, instead of generating a sequence of uniforms ranged up to $1$, then $2$, then $3$, etc., $n$. The complexity is exactly $n\log n + O(1)$ in calls to RandNum50 and is described in some detail here, using as a source of random bit (as suggested by Vor): if ( rand50() > 25 ) then b = 1 else b = 0 // random bit This means that no possible distribution of outcomes to random-number calls can produce a uniform permutation. There are other methods that can be used to get a better (arbitrarily better) and faster approximation but (up to my knowledge) the only way to get a truly uniform distribution is to use the rejection sampling: pick $m = \lceil \log_2(k) \rceil$ random bits and if the number $r$ obtained is less than $k$ return it, otherwise generate another random number a possible implementation: function trulyrand(k) $ can't be of this form, because $100!$ doesn't divide $50^k$ for any $k$ (for instance, 3 divides $100!$ but can't divide any number of the form $50^k$). The Fisher-Yates algorithm becomes: arr : arrayįor i = 0 to 99 do arr = i+1 // store 1.100 in the arrayĪs pointed out by Erick the krand function above doesn't return a truly uniform distribution. using a uniform random generator in įor i = 1 to k do sum = sum + RandNum50() - 1 In order to keep uniform distribution with good approximation (see EDIT section below) at every iteration you can use this trick to produce a value krand between $0$ and $k-1$: // return a random number in with uniform distribution I thought (so it can be wrong :-) of this $O(N^2)$ solution that uses the Fisher-Yates shuffle.

0 Comments

Leave a Reply. |

Details

AuthorWrite something about yourself. No need to be fancy, just an overview. ArchivesCategories |

RSS Feed

RSS Feed